User Agents For Scraping

Introduction

In web scraping, user agents play a crucial role in how web servers perceive requests. A user agent string is the value of the User-Agent HTTP request header, which a browser, bot, or other client sends to identify itself. The server uses this identification to decide how to respond, for example by serving a mobile layout or by rejecting a client that looks automated. For web scraping, managing user agents well can noticeably improve the success rate of data extraction.

Understanding User Agents

User agent strings let servers distinguish between different types of web clients. They typically describe the browser and its version, the operating system, and sometimes the device. For instance, a request from Chrome on Windows carries a different user agent string than a request from Safari on a mobile device. Servers that try to block automated scraping often use these differences as an early filter.
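To make the difference concrete, the sketch below compares two illustrative user agent strings for the clients mentioned above. The version numbers are examples only, and `describe_user_agent` is a hypothetical helper that uses simple substring checks; it is not a complete parser.

```python
# Illustrative user agent strings; the version numbers are examples only.
CHROME_WINDOWS = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36"
)
SAFARI_IPHONE = (
    "Mozilla/5.0 (iPhone; CPU iPhone OS 17_0 like Mac OS X) AppleWebKit/605.1.15 "
    "(KHTML, like Gecko) Version/17.0 Mobile/15E148 Safari/604.1"
)


def describe_user_agent(ua: str) -> dict:
    """Crude substring-based guess at browser and platform (hypothetical helper)."""
    # Chrome's string also contains "Safari/", so Chrome must be checked first.
    if "Chrome/" in ua:
        browser = "Chrome"
    elif "Safari/" in ua:
        browser = "Safari"
    else:
        browser = "unknown"

    if "Windows NT" in ua:
        platform = "Windows"
    elif "iPhone" in ua:
        platform = "iPhone"
    else:
        platform = "unknown"
    return {"browser": browser, "platform": platform}


print(describe_user_agent(CHROME_WINDOWS))
print(describe_user_agent(SAFARI_IPHONE))
```

Real servers tend to rely on more robust parsing than this, but the same idea applies: the string alone already reveals a lot about the client.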

Importance of User Agents in Scraping

Many HTTP libraries send a default user agent that names the tool itself, such as Python-urllib/3.x, which makes automated requests easy to spot. When scraping websites, sending a user agent string taken from a common browser can make the scraping tool look like a regular browser and keep the server from rejecting the request outright as a bot. However, changing the user agent string alone is not sufficient, as many websites employ additional techniques to identify and block scraping activities.
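As a minimal sketch of setting a browser-like user agent, the example below builds a request with Python's standard `urllib.request` module. The URL and the `build_request` helper are assumptions for illustration, and the request is only constructed here, not sent.

```python
import urllib.request

# Illustrative browser-like string; the version number is an example only.
BROWSER_UA = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36"
)


def build_request(url: str, user_agent: str = BROWSER_UA) -> urllib.request.Request:
    """Return a Request that carries the given User-Agent header (hypothetical helper)."""
    return urllib.request.Request(url, headers={"User-Agent": user_agent})


req = build_request("https://example.com/")

# urllib stores header names capitalized as "User-agent", so look it up that way.
print(req.get_header("User-agent"))

# Passing req to urllib.request.urlopen would send it with this header.
```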

Techniques for Managing User Agents

To effectively manage user agents during web scraping, several techniques can be employed:

- Rotating User Agents: Regularly changing the user agent string used in requests can help avoid detection. This can be done manually or through automated scripts.

- Using a Web Scraping API: Services like ZenRows offer features such as auto-rotating user agents, which can simplify the process of managing user agents and reduce the risk of being blocked.

- Custom User Agents: Creating custom user agent strings that mimic popular browsers can further enhance the chances of successful scraping.

- Monitoring Response Headers: Checking the status code and headers of each response, for example a 403 or 429 status or a Retry-After header, can indicate whether the user agent is being accepted or rejected.
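The rotation technique from the list above can be sketched as follows. This is one possible approach using only the standard library; the pool contents, the URLs, and the names `USER_AGENT_POOL`, `random_user_agent`, and `rotating_requests` are assumptions made for illustration.

```python
import itertools
import random
import urllib.request

# A small illustrative pool; in practice it would hold current, realistic strings.
USER_AGENT_POOL = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 "
    "(KHTML, like Gecko) Version/17.0 Safari/605.1.15",
    "Mozilla/5.0 (X11; Linux x86_64; rv:121.0) Gecko/20100101 Firefox/121.0",
]


def random_user_agent() -> str:
    """Pick a user agent at random from the pool."""
    return random.choice(USER_AGENT_POOL)


def rotating_requests(urls):
    """Yield one Request per URL, cycling through the pool in order."""
    ua_cycle = itertools.cycle(USER_AGENT_POOL)
    for url, ua in zip(urls, ua_cycle):
        yield urllib.request.Request(url, headers={"User-Agent": ua})


# The requests are only built here, not sent.
for request in rotating_requests(["https://example.com/a", "https://example.com/b"]):
    print(request.full_url, "->", request.get_header("User-agent"))
```

Random selection avoids a predictable sequence, while cycling spreads requests evenly across the pool; which one works better depends on the target site.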

Challenges in User Agent Management

Despite the advantages of managing user agents, challenges remain. Some websites use advanced techniques such as fingerprinting, which looks at signals beyond the user agent string and can therefore identify bots even when user agents are rotated. Additionally, certain sites employ rate limiting or CAPTCHA challenges that can hinder scraping regardless of how user agents are managed.
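A scraper can at least recognize when it has run into one of these obstacles. The sketch below classifies a response using common signals: HTTP status 429 (Too Many Requests), status 403 (Forbidden), and the word "captcha" in the body. The function names and the body check are assumptions for illustration; real sites vary, so this is a heuristic rather than a reliable detector.

```python
def classify_response(status: int, body: str = "") -> str:
    """Heuristically label a response (hypothetical helper)."""
    if "captcha" in body.lower():
        return "captcha"
    if status == 429:
        return "rate_limited"
    if status == 403:
        return "blocked"
    if 200 <= status < 300:
        return "ok"
    return "other"


def retry_after_seconds(headers: dict):
    """Return the Retry-After value in seconds, or None if absent or not a number.

    Retry-After may also be an HTTP date; that form is not handled here.
    """
    normalized = {name.lower(): value for name, value in headers.items()}
    value = normalized.get("retry-after", "").strip()
    return int(value) if value.isdigit() else None


print(classify_response(429), retry_after_seconds({"Retry-After": "30"}))
print(classify_response(200, "<html>Please solve this CAPTCHA</html>"))
```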

Best Practices for Effective Scraping

To maximize the effectiveness of web scraping while managing user agents, consider the following best practices:

- Combine Techniques: Use a combination of rotating user agents and proxies to further obscure the scraping activity.

- Respect robots.txt: Always check the website's robots.txt file to learn which paths the site asks automated clients to avoid, and follow those rules to reduce the risk of legal issues.

- Limit Request Frequency: To avoid triggering anti-bot measures, limit the frequency of requests made to the server.

- Test and Adapt: Continuously test different user agents and adapt strategies based on the responses received from the server.
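Two of the practices above, respecting robots.txt and limiting request frequency, can be sketched with Python's standard `urllib.robotparser` module. The robots.txt content, the `ExampleScraper` name, the URLs, and the helper functions are all assumptions for illustration. The rules are parsed from a string so the example runs without network access.

```python
import time
import urllib.robotparser

# Example robots.txt content (an assumption, not taken from a real site).
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""


def load_rules(robots_txt: str) -> urllib.robotparser.RobotFileParser:
    """Parse robots.txt text into a RobotFileParser."""
    parser = urllib.robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def polite_delay(parser, user_agent: str, default: float = 1.0) -> float:
    """Use the site's Crawl-delay if it declares one, else a default pause."""
    delay = parser.crawl_delay(user_agent)
    return float(delay) if delay is not None else default


def crawl(urls, parser, user_agent, fetch, sleep=time.sleep):
    """Fetch only allowed URLs, pausing after each request."""
    delay = polite_delay(parser, user_agent)
    results = []
    for url in urls:
        if not parser.can_fetch(user_agent, url):
            continue  # skip paths the site asks crawlers to avoid
        results.append(fetch(url))
        sleep(delay)
    return results


parser = load_rules(ROBOTS_TXT)
print(parser.can_fetch("ExampleScraper", "https://example.com/public/page"))
print(parser.can_fetch("ExampleScraper", "https://example.com/private/data"))
print(polite_delay(parser, "ExampleScraper"))
```

Passing `fetch` and `sleep` as parameters keeps the loop independent of any particular HTTP client and makes it easy to test without real delays.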

Conclusion

User agents are a fundamental part of web scraping and can strongly influence whether data extraction succeeds. Scrapers that manage user agents well are less likely to be rejected as bots, but user agent management works best alongside the other practices described here: proxies, sensible request rates, respect for robots.txt, and continuous testing. Tools that automate these steps can further reduce the chance of being blocked.

Get Ready to Run: Coastal Delaware Running Festival Marathon

Get Ready to Run: Coastal Delaware Running Festival Marathon

Health

Health  Fitness

Fitness  Lifestyle

Lifestyle  Tech

Tech  Travel

Travel  Food

Food  Education

Education  Parenting

Parenting  Career & Work

Career & Work  Hobbies

Hobbies  Wellness

Wellness  Beauty

Beauty  Cars

Cars  Art

Art  Science

Science  Culture

Culture  Books

Books  Music

Music  Movies

Movies  Gaming

Gaming  Sports

Sports  Nature

Nature  Home & Garden

Home & Garden  Business & Finance

Business & Finance  Relationships

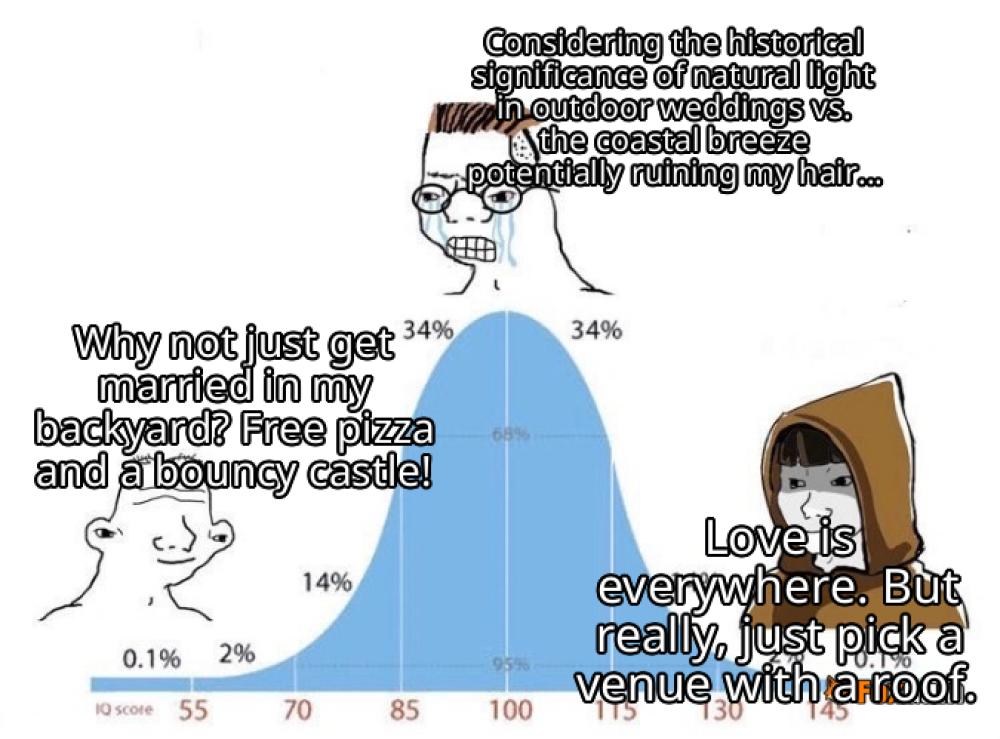

Relationships  Pets

Pets  Shopping

Shopping  Mindset & Inspiration

Mindset & Inspiration  Environment

Environment  Gadgets

Gadgets  Politics

Politics